Biochemistry Instruments and Reagents

Clinical chemistry augmented with AI is the future

Artificial intelligence (AI) applications are an area of active investigation in clinical chemistry, and are expected to transform the practice of the clinical chemistry laboratory.

The Covid-19 pandemic created significant hurdles for the healthcare sector. As infection spread, however, more clinical chemistry testing was carried out on SARS-CoV-2 carriers. Tests including albumin and C-reactive protein (CRP), as well as less common ones such as lactate dehydrogenase and procalcitonin, were used to assess organ involvement and disease severity, and to estimate the probability of morbidity and mortality.

Moreover, during the pandemic, clinical chemistry tests and serological testing helped to keep track of affected people’s general health. The pandemic increased the use of these tests and contributed significantly to the market’s expansion.

The global clinical chemistry market is estimated at USD 13.6 billion in 2022, set to reach USD 17.1 billion by 2027, at a significant CAGR of 4.69 percent.
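The growth rate quoted above can be checked with the standard compound annual growth rate formula. The following is a minimal sketch; the function names `cagr` and `project` are illustrative, and the five-year horizon (2022 to 2027) is read from the figures in the text.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Return the compound annual growth rate as a fraction (0.05 means 5 percent)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Grow start_value at a constant annual rate for the given number of years."""
    return start_value * (1 + rate) ** years


# Figures from the text: USD 13.6 billion (2022) to USD 17.1 billion (2027).
rate = cagr(13.6, 17.1, 5)
print(f"Implied CAGR: {rate * 100:.2f} percent")
print(f"Projected 2027 value: USD {project(13.6, 0.0469, 5):.1f} billion")
```

Run as written, the implied rate rounds to the 4.69 percent quoted, so the three figures are mutually consistent.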

Fully automated clinical chemistry testing is becoming popular because it supports higher testing volumes and quicker processing times. Additionally, with advanced processing, automated testing improves user protection from biohazards and decreases the possibility of cross-contamination. For these reasons, fully automated clinical chemistry testing is expected to continue expanding during the forecast period.

Due to the rising patient load and frequent readmissions, hospitals hold a substantial share of the clinical chemistry market. Additionally, growing government initiatives to support adequate diagnostic facilities that produce speedy results and enhance overall efficiency play a significant role in the market expansion for this segment.

Abbott Laboratories, Danaher Corporation, EKF Diagnostics Holdings PLC, Hitachi, HORIBA Ltd., Johnson & Johnson, Siemens Healthineers, Sysmex ADR, and Thermo Fisher Scientific are the leading players in the clinical chemistry market. To maintain their industrial position, these companies are concentrating on expansion strategies, including mergers and acquisitions.

AI and clinical chemistry

Artificial intelligence (AI) applications are an area of active investigation in clinical chemistry.

AI models in healthcare have the potential to improve the precision and speed of personalized medicine, in some cases helping to identify the best treatment or preventive care. Clinicians are already implementing these models in areas such as early detection of sepsis and analysis of radiology images for diagnosis of prostate cancer and other conditions. It is a growing area that laboratory medicine professionals should pay attention to, as data generated by laboratory testing is a major input to the AI tools that support clinical decisions.

Clinical AI and a subset of AI, known as machine learning (ML), can be used for tasks in a broad range of fields, from precision medicine to population health. The main advantage is speed, because these tools rely on computerized rather than manual tasks. There is a lot of interest in how to make AI/ML algorithms more advanced so they can predict more complex outcomes, such as response to cancer treatment or risk of adverse events from surgery.

Jason Armstrong

Scientific Content Creator,

Randox Laboratories Limited

Measurement vs calculation: sdLDL-C

Small dense LDL cholesterol (sdLDL-C) is a form of LDL cholesterol carried in particles that are smaller and denser than the larger, more buoyant LDL particles. sdLDL particles deliver triglycerides and cholesterol to tissues. However, their smaller size allows them to penetrate the arterial wall more readily, which increases their atherogenic effect: patients with elevated sdLDL-C levels have a 3-fold increased risk of myocardial infarction. Since the emergence of sdLDL-C as a causal ASCVD risk factor, new techniques have been developed to quantify sdLDL particles, but these methods vary in accuracy and precision.

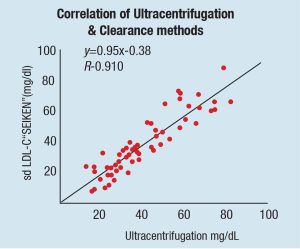

Several methods quantify sdLDL concentration with varying efficacy; the gold standard is ultracentrifugation. Other methods, such as ELISA and NMR, may be effective but require either expensive equipment or laborious test procedures. Automated clinical chemistry analysers provide clinicians with a simple, effective strategy for quantifying sdLDL concentration, and these assays take two forms: direct methods and calculation methods. Direct methods quantify sdLDL directly, whereas calculation methods estimate sdLDL concentration by applying equations to the results of tests that measure another entity, often LDL-C, which includes sdLDL-C and other LDL particles.

Data displayed shows the positive bias of calculation methods for quantifying sdLDL-C in comparison with direct quantification methods.

| Cohort | sdLDL-C analysis | Quartile 1 (mg/dL) | Quartile 1 HRadj (95% CI) | Quartile 2 (mg/dL) | Quartile 2 HRadj (95% CI) | Quartile 3 (mg/dL) | Quartile 3 HRadj (95% CI) | Quartile 4 (mg/dL) | Quartile 4 HRadj (95% CI) |
|---|---|---|---|---|---|---|---|---|---|
| All subjects | Direct | <28.1 | 1.00 (ref) | 28.1–<39.3 | 1.12 (1.07-1.17) | 39.3–<54.2 | 1.27 (1.16-1.39) | 54.2–214.8 | 1.56 (1.31-1.85) |
| All subjects | Calculated | <33.3 | 1.00 (ref) | 33.3–<42.0 | 1.06 (0.88-1.14) | 42.0–<51.8 | 1.11 (0.96-1.28) | 51.8–211.0 | 1.19 (0.19-1.51) |
| Men | Direct | <29.6 | 1.00 (ref) | 29.6–<41.3 | 1.14 (1.07-1.21) | 41.3–<55.1 | 1.30 (1.14-1.47) | 55.1–151.8 | 1.57 (1.26-1.96) |
| Men | Calculated | <33.3 | 1.00 (ref) | 33.3–<43.1 | 1.05 (0.94-1.16) | 42.1–<51.5 | 1.09 (0.90-1.32) | 51.5–149.1 | 1.15 (0.84-1.56) |
| Women | Direct | <27.1 | 1.00 (ref) | 27.1–<37.5 | 1.11 (1.04-1.18) | 37.5–<53.0 | 1.26 (1.09-1.45) | 53.0–214.8 | 1.58 (1.19-2.08) |
| Women | Calculated | <33.2 | 1.00 (ref) | 33.3–<41.9 | 0.95 (0.83-1.08) | 41.9–<52.1 | 0.91 (0.71-1.16) | 52.1–210.0 | 0.85 (0.56-1.29) |

One study investigated the correlation between direct and calculated sdLDL-C and the ASCVD risk associated with each method of quantification. Schaefer et al. reported a significant difference between direct and calculated sdLDL-C concentrations, with a coefficient of determination of r² = 0.674.

Further analysis in this paper shows that directly measured sdLDL-C provides significant additional information on ASCVD risk, whereas calculated values do not. As the direct clearance method used in this analysis shows excellent correlation with the ultracentrifugation method, the authors suggest that calculated methods for the quantification of sdLDL-C are not as reliable as direct methods.
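A method comparison of this kind usually reports both how closely two sets of results track each other (r²) and how far one sits above or below the other on average (bias). The sketch below illustrates the arithmetic only. The paired values are invented for illustration and are not data from Schaefer et al., and the helper names `pearson_r` and `mean_bias` are hypothetical.

```python
from statistics import mean


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length, at least 2 points")
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def mean_bias(reference, candidate):
    """Average of (candidate - reference); positive means the candidate reads high."""
    return mean(c - r for r, c in zip(reference, candidate))


# Hypothetical paired sdLDL-C results in mg/dL (illustrative values only).
direct = [22.0, 30.5, 41.0, 55.5, 68.0, 35.0]
calculated = [30.0, 34.0, 43.5, 52.0, 61.0, 40.5]

r_squared = pearson_r(direct, calculated) ** 2
print(f"r squared: {r_squared:.3f}")
print(f"mean bias (calculated - direct): {mean_bias(direct, calculated):.2f} mg/dL")
```

A high r² alone does not establish that two methods are interchangeable, which is why the bias is reported alongside it.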

sdLDL-C is an important risk factor associated with ASCVD; therefore, accurate quantification is critical for clinicians and patients alike. Direct measurement methods appear to allow more accurate analysis of ASCVD risk. As technology and knowledge advance, calculation methods for the quantification of other biomarkers may also become obsolete; however, careful analysis and comparison of these methods remains paramount.

Clinicians focus on understanding individual patients and their trajectories. But laboratorians have great skills in aggregating and processing data. A natural extension of delivering a lab result is to deliver a risk analysis or a likely diagnosis. That is really what all these predictive analytic tools are about.

Adoption commenced but progress is slow

There has been some adoption of AI and ML techniques in the laboratory setting, primarily in molecular pathology (such as classification of central nervous system tumors by DNA methylation profiling) and digital pathology (such as image analysis), but progress has been slow. Applications that have shown impressive accuracy include predicting laboratory test values, improving laboratory utilization, automating laboratory processes, promoting precision laboratory test interpretation, and improving laboratory medicine information systems.

Overall, AI/ML technology holds promise to leverage large amounts of medical data to create more personalized interpretations of test results, the paper said, such that the paradigm could shift from defining a normal hemoglobin level in general to defining one for a particular individual.
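The shift from a population range to an individual one can be illustrated with a deliberately simple rule: derive a personal range from a patient's own previous results and compare a new result against both. This is only a sketch of the idea, not an ML model and not a validated clinical method. The population limits, the patient history, and the two-standard-deviation rule below are all assumptions chosen for illustration.

```python
from statistics import mean, stdev

# Illustrative population reference interval for hemoglobin in g/dL (assumed values).
POPULATION_RANGE = (12.0, 16.0)


def personal_range(history, k=2.0):
    """Return (low, high) as the mean of prior results plus or minus k standard deviations."""
    if len(history) < 3:
        raise ValueError("need at least three prior results")
    centre = mean(history)
    spread = stdev(history)
    return centre - k * spread, centre + k * spread


def in_range(value, bounds):
    """True when value lies within the inclusive (low, high) bounds."""
    low, high = bounds
    return low <= value <= high


# Hypothetical prior results for one patient, and a new result to interpret.
history = [13.9, 14.1, 13.8, 14.0, 14.2]
new_result = 12.8

print("Within population range:", in_range(new_result, POPULATION_RANGE))
print("Within personal range:  ", in_range(new_result, personal_range(history)))
```

In this invented example the new result is unremarkable against the population limits but falls well below the patient's own stable baseline, which is the kind of signal a personalized interpretation aims to surface.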

Chemistry and immunology laboratories are particularly well-suited to leverage ML because they generate large, highly structured data sets. Labor-intensive processes, used for interpretation and quality control of electrophoresis traces and mass spectra, could benefit from automation as the technology improves. Clinical chemistry laboratories also generate digital images – such as urine sediment analysis – that may be highly conducive to semi-automated analyses, given advances in computer vision.

Challenges abound

Laboratories still must face significant challenges before the technology can be used in a more widespread manner. These include the need to collect high-quality data from diverse populations and to manage costs associated with computational infrastructure and personnel to develop and update algorithms and software tools.

In this relatively new field, there have also been no guidelines on best practices for clinically validating the algorithms. Within the past year, the College of American Pathologists formed a committee to help establish laboratory standards for AI applications. Although it is still unclear what role federal and state regulators will take, the Food and Drug Administration has convened a committee to look into AI-driven devices.

Lack of familiarity with the technology also can be a barrier to selecting appropriate programs for use. Some people rely on the vendor’s marketing information, which can be overstated. In that case, laboratory professionals can ask for help from someone else at their institution knowledgeable about the field who can aid in assessing these models.

Understanding the different tasks that AI could assist with can help in approaching some of the challenges. For people less familiar with AI, trusting a model to perform a diagnostic task currently done by highly specialized staff or biologists can be a big hurdle. Incorporating AI for something that seems lower risk, such as assessing workflow for areas that could be optimized or detecting patterns in test utilization that could be improved, is an easier first step.

Another approach is to implement an AI program alongside a manual process, assessing its performance along the way, as a means to ease into using the program. One of the most impactful things that laboratorians can do today is to help make sure that the lab data that they’re generating is as robust as possible, because these AI tools rely on new training sets, and their performance is really only going to be as good as the training data sets they are given.
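Running a program alongside the manual process produces paired calls that can be summarized with standard agreement statistics. The following is a minimal sketch that treats the manual result as the reference and computes sensitivity, specificity, and Cohen's kappa. The function name `agreement` and the example calls are hypothetical, and the sketch assumes both positive and negative cases are present in the reference.

```python
def agreement(manual, ai):
    """Summarize paired positive/negative calls, treating manual review as the reference."""
    if len(manual) != len(ai) or not manual:
        raise ValueError("need two non-empty sequences of equal length")
    tp = sum(1 for m, a in zip(manual, ai) if m and a)
    tn = sum(1 for m, a in zip(manual, ai) if not m and not a)
    fp = sum(1 for m, a in zip(manual, ai) if not m and a)
    fn = sum(1 for m, a in zip(manual, ai) if m and not a)
    n = tp + tn + fp + fn

    observed = (tp + tn) / n
    # Agreement expected by chance, from each rater's marginal totals.
    expected = ((tp + fn) * (tp + fp) + (tn + fp) * (tn + fn)) / n**2
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "kappa": (observed - expected) / (1 - expected),
    }


# Hypothetical parallel run: True means the sample was flagged as abnormal.
manual_calls = [True, True, True, True, False, False, False, False, False, False]
ai_calls = [True, True, True, False, False, False, False, False, False, True]

for name, value in agreement(manual_calls, ai_calls).items():
    print(f"{name}: {value:.2f}")
```

Tracking these figures over successive batches gives a simple record of whether the program's performance holds up before it replaces any manual step.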

There are ethical considerations when using AI in medicine, too. One is data access and another is how to properly get consent from patients to include their data in larger pools. And, when the data is used, how can clinicians ensure they do not introduce additional biases? The models we produce are only as good as the data we collect. So if there are underlying biases in how we provide clinical care, those will potentially translate through to our models as well.

AI models also may not help if the data set used does not reflect the population served by a particular laboratory or health system. Understanding and validating the models for individual settings is important, she noted.

“Our job is to ensure that whenever we do predictions for a particular patient that change the course of care, we are very convinced that the model is appropriate and it’s the best we can do at a particular point in time,” Ohno-Machado said, noting that models will need to be refreshed over time as new data and outcomes are collected.

Bridge to the future

In the future, AI will be incorporated directly into more devices and instruments, and laboratory directors might not be choosing standalone AI programs. Instead, AI features may be incorporated into larger software packages they are considering.

AI will help clinicians move forward with many more precision medicine-based approaches, and leverage a lot of biomedical knowledge that today does not make it into clinical AI and ML models. It will be ubiquitous to the point that it will not matter whether AI or some other type of method generated the results. With more and more data, the models tend to get better. And the intention to use training data that is more diverse should mitigate some of the problems AI has had to date.